|

6/20/2023 0 Comments Airflow xcom delete

V1PodAffinity ( required_during_scheduling_ignored_during_execution = ), topology_key = ". V1NodeAffinity ( preferred_during_scheduling_ignored_during_execution = ) ] ), ) ] ), pod_affinity = k8s. V1Container ( name = "init-container", image = "ubuntu:16.04", env = init_environments, volume_mounts = init_container_volume_mounts, command =, args =, ) affinity = k8s. V1ContainerPort ( name = "http", container_port = 80 ) init_container_volume_mounts = init_environments = init_container = k8s. V1PersistentVolumeClaimVolumeSource ( claim_name = "test-volume" ), ) port = k8s. V1Volume ( name = "test-volume", persistent_volume_claim = k8s. V1VolumeMount ( name = "test-volume", mount_path = "/root/mount_file", sub_path = None, read_only = True ) configmaps = volume = k8s. Value = f"/", "")Īnd that's it! Now you can use this custom xcom implementation in your dags and modify the serialization function to your needs, for example you can add support for numpy arrays or any other format that you need.Secret_file = Secret ( "volume", "/etc/sql_conn", "airflow-secrets", "sql_alchemy_conn" ) secret_env = Secret ( "env", "SQL_CONN", "airflow-secrets", "sql_alchemy_conn" ) secret_all_keys = Secret ( "env", None, "airflow-secrets-2" ) volume_mount = k8s. AIRFLOW-1698 Remove SCHEDULERRUNS env var in systemd AIRFLOW-1694 Stop using itertools. Otherwise, it will create an S3 hook, serialize it to a pickle format, upload to S3 and in the end, only the S3 path is returned from the task.

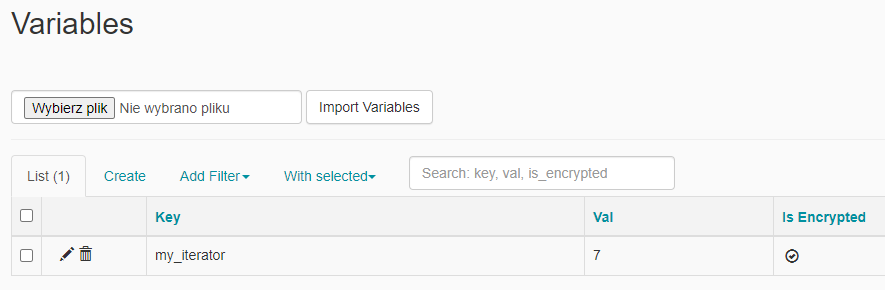

In the serialization method, we will first check if the value is an instance of pandas DataFrame, if not, it can just return it. But you will see that this method can be easily extended to other ones as well. In this implementation, we will limit ourselves only to pandas DataFrames while keeping backward compatibility for anything else. As the name suggests, one will be used to serialize variables into XCom-compatible format and another one to retrieve it. Now we need to implement two static methods, serialize_value and deserialize_value. Iuliia Volkova Follow Published in Analytics Vidhya 4 min read - 2 Some time ago I was asked by one of my colleges on not difficult question. Then let's create a new class, subclassing the original BaseXCom, we also add two variables to it, we will get to them later class S3XComBackend(BaseXCom):īUCKET_NAME = os.environ.get("S3_XCOM_BUCKET_NAME") Let's start by importing everything we will need: import osįrom .hooks.s3 import S3Hook In Airflow, you have an option to create your own XCom implementation. By being able to exchange small data frames between the tasks, their roles can be nicely isolated and if there is an error in the processing, we have visibility into the data for troubleshooting. For instance, the first task might create the DataFrame from records in the external database (that is not managed by us), send it to a second one and finally, the third one might send us a report. That being said, at Pilotcore we find that it's handy to be able to exchange data between tasks that are sometimes a little bigger than just 64 KB. It's good to mention that Airflow is not designed for heavy data processing, for that use case, you could be better off with a specialized tool like Spark. Push return code from bash operator to XCom. Push and pull from other Airflow Operator than pythonOperator. Airflow Push and pull same ID from several operator. From left to right, The key is the identifier of your XCom. Learning Airflow XCom is no trivial, So here are some examples based on use cases I have personaly tested: Basic push/pull example based on official example. You can think of an XCom as a little object with the following fields: that is stored IN the metadata database of Airflow. secrets (.Secret) Kubernetes secrets to inject in the container, They can be exposed as environment vars or files in a volume. If the task is unmapped, all XComs matching this task ID in the same DAG run are. Yes XComs What is an Airflow XCom XCom stands for cross-communication and allows to exchange messages or small amount of data between tasks. clearxcomdata (session NEWSESSION) source Clear all XCom data from the database for the task instance. That's why they, in the default form, can't be used to send and retrieve data frames or other bigger storage types. pooloverride (str None) Use the pooloverride instead of task’s pool. Actually, the size limit will differ depending on your backend: They can have any (serializable) value, however, they are designed to handle only very small data. In Airflow, XComs (short for "cross-communications") are a mechanism that lets tasks talk to exchange data between themselves.Īn XCom is identified by a key (essentially its name), as well as the task_id and dag_id it came from.

Want to get up and running fast in the cloud? Contact us today.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed